Agent delegation: your dev agent delegates the test

Agent delegation lets your dev agent finish a feature and hand the validation off to a separate QA agent. The dev keeps shipping code with the model you trust on hard problems. The QA agent runs the test on a cheaper model. Both talk through the AgentsRoom MCP servers, so agent delegation works end to end without you copying anything around.

You stop paying Opus prices for browser clicks. You stop bloating your dev agent's context with screenshots and DOM dumps. Agent delegation routes each task to the right model at the right price, and when the QA agent is done, it pings the dev agent back so the loop closes on its own.

Agent delegation in action : the Codex dev agent finishes the feature, calls run_qa_test, the QA agent opens the browser on a cheaper model and reports back.

Here is the problem agent delegation solves. You run a strong dev agent (Claude Opus, Codex, the kind of model that designs an API or refactors a store). The agent ships the feature in 10 minutes. Then it spends the next 8 minutes clicking around a browser to verify the feature works. Same expensive token rate. Same model that was thinking hard about your domain logic, now reading button labels.

Agent delegation fixes that. When the feature is done, the dev agent calls a single MCP tool, run_qa_test, with a scenario. AgentsRoom spawns an ephemeral QA agent on the model you picked for QA : Claude Haiku, Codex mini, GPT-4 mini, anything you want. The QA agent gets the AgentsRoom Browser MCP, drives the page, asserts the result, and replies with a verdict. The dev agent reads the verdict and moves on.

That is agent delegation, and that is the only loop this page covers. One dev, one QA, one MCP. Same idea as a senior engineer delegating regression testing to a junior or to QA: the senior keeps designing, the junior runs the checklist. Agent delegation gives you that same split between models.

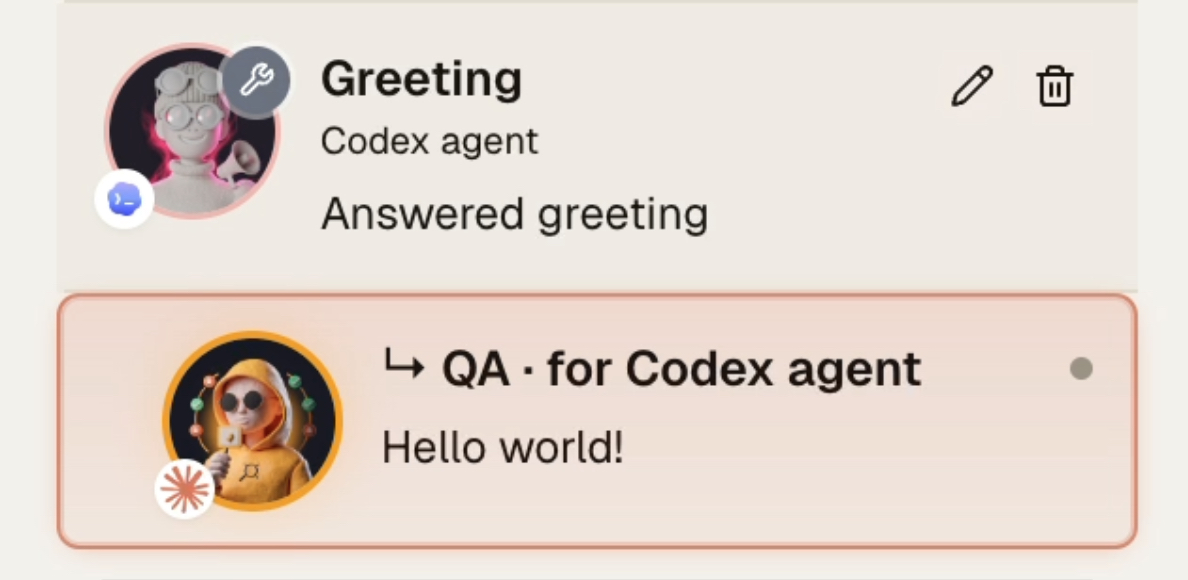

Agent delegation visualized : the parent dev agent (Codex) and the child QA agent (Claude) appear in the same agent list, with a clear dev to QA handoff.

Why agent delegation is worth wiring in

First, money. A test pass on Claude Opus and a test pass on Claude Haiku cost vastly different amounts. Same browser, same assertions, same screenshots. Agent delegation lets the cheap model do the cheap work. People who turned this on report dropping their token bill on QA-heavy days by a real, measurable factor, not by 5 to 10 percent.

Second, context. When a dev agent runs the test itself, every screenshot, every DOM dump, every console log ends up in the dev agent's context window. Twenty minutes of clicking is megabytes of noise the dev agent has to carry through the rest of the session. Agent delegation isolates that noise inside the ephemeral QA agent. The dev agent gets a clean 'pass' or 'fail' message back, nothing else.

Third, the ecological angle. Every agent delegation saves real compute. Running Haiku where Opus was running should lower the energy footprint on that step, since a smaller model generally needs less compute per token. Multiply by everyone in the team and by every test loop in a year and agent delegation becomes a non-trivial knob on the carbon side of your stack.

Fourth, reliability. A dev agent that drives the browser itself tends to wander. Two screenshots in, it forgets what it was trying to validate. The QA agent in agent delegation has one job and one prompt. It tests, it reports, it dies. The loop is short, predictable and easy to debug.

The only flow agent delegation covers here

One dev agent. One QA agent. One MCP call. Agent delegation, end to end.

Dev agent ships the feature

Your dev agent (Claude Opus, Codex high reasoning, whichever expensive model you trust) finishes the implementation. New endpoint, new screen, new flow. Code is written, files are saved.

Dev agent calls run_qa_test

Instead of opening the browser itself, the dev agent calls a single MCP tool from the AgentsRoom Test Runner server : run_qa_test, with a plain English scenario. That is the entire agent delegation API surface.

AgentsRoom spawns the QA agent

AgentsRoom Test Runner spawns an ephemeral QA agent on the cheaper model you configured (Claude Haiku, Codex mini, GPT-4 mini). The QA agent gets the AgentsRoom Browser MCP tools : navigate, click, type, screenshot, evaluate, get_logs, get_state.

QA agent runs the test

The QA agent opens the page, walks through the scenario, asserts the result, captures screenshots if needed and reads the console logs to catch the runtime errors a dev agent would have missed.

QA agent submits the verdict

When done, the QA agent calls submit_verdict with a pass, fail or inconclusive result and a short summary. Screenshots and logs are attached. The QA agent process is destroyed. Its context window goes with it.

Dev agent reads the verdict and moves on

The dev agent receives the verdict back as the response to run_qa_test. On pass, the dev agent commits or moves to the next ticket. On fail, the dev agent reads the failure summary, fixes the bug and triggers a new agent delegation cycle. The loop closes by itself.
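The last two steps can be sketched in a few lines. This is a minimal sketch, not the AgentsRoom API: the field names (`status`, `summary`, `screenshotPath`, `consoleLogs`) and the `nextStep` helper are hypothetical, and the handling of an inconclusive verdict is an assumption, since the flow above only spells out pass and fail.

```typescript
// Hypothetical verdict shape. The page names the three results and a short
// summary; the exact field names here are assumptions for illustration.
type VerdictStatus = "pass" | "fail" | "inconclusive";

interface Verdict {
  status: VerdictStatus;
  summary: string;
  screenshotPath?: string; // optional attachment
  consoleLogs?: string[];  // optional attachment
}

type NextStep = "commit_or_next_ticket" | "fix_and_retest" | "rerun_or_escalate";

// One entry per verdict status, so adding a status forces a decision here.
const NEXT_STEP: Record<VerdictStatus, NextStep> = {
  pass: "commit_or_next_ticket",
  fail: "fix_and_retest",
  // Assumption: the page does not say what happens on "inconclusive".
  inconclusive: "rerun_or_escalate",
};

// Decide what the dev agent does once run_qa_test has returned a verdict.
function nextStep(verdict: Verdict): NextStep {
  return NEXT_STEP[verdict.status];
}
```

The dev agent only ever branches on the small verdict object. Nothing in this sketch touches a screenshot or a DOM dump, which is the point of the handoff.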

The economics of agent delegation

Why a smart dev to QA split lowers your AI bill without lowering your standards.

Browser tests are repetitive. Open the page, click the button, read the label, check the toast. A 3 dollar per million tokens model does that work as well as a 50 dollar per million tokens model. Maybe better, because the cheap model is not bored. Agent delegation puts the cheap model on the boring half of the job.

Real numbers from real sessions : a typical end to end test on a complex flow burns 60k to 200k tokens between screenshots, DOM dumps and reasoning steps. On Opus, that is real money per test. On Haiku, that is loose change. Agent delegation turns a daily QA habit from a budget concern into a free reflex.

Multiply by every loop. A normal dev day on a non trivial feature runs the test five to twenty times. Agent delegation compounds across those reps. The dev agent stays expensive (you want it expensive), the QA agent stays cheap, and the gap is pure savings.
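The arithmetic behind that gap is short enough to write down. The sketch below reuses the illustrative prices quoted above (50 and 3 dollars per million tokens) and the 60k to 200k token range. It assumes one flat price per token, whereas real price lists split input and output tokens, so treat the results as orders of magnitude, not as a quote.

```typescript
// Illustrative flat prices in dollars per million tokens, taken from the
// figures quoted in this section. They are not a real provider price list.
const DEV_PRICE_PER_MILLION = 50;
const QA_PRICE_PER_MILLION = 3;

// Cost of one test pass for a given token count and price.
function costPerTest(tokens: number, pricePerMillion: number): number {
  return (tokens * pricePerMillion) / 1e6;
}

// Dollars saved per day by running every test pass on the QA model
// instead of the dev model.
function dailySavings(tokensPerTest: number, runsPerDay: number): number {
  const gapPerTest =
    costPerTest(tokensPerTest, DEV_PRICE_PER_MILLION) -
    costPerTest(tokensPerTest, QA_PRICE_PER_MILLION);
  return gapPerTest * runsPerDay;
}
```

Under these assumptions, a 200k token test costs 10 dollars on the dev model and 0.60 dollars on the QA model, and a 60k token test costs 3 dollars against 0.18 dollars. At twenty runs a day of the heavy test, `dailySavings(200000, 20)` comes to 188 dollars. At five runs of the light one, `dailySavings(60000, 5)` comes to about 14 dollars.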

Agent delegation is also kinder to the planet. Less compute on the same job means less energy, less water in the datacenter, less carbon. Not the only reason to wire agent delegation in, but a fair side effect of routing tasks to right-sized models.

A real model split for agent delegation

What people actually plug into the dev side and the QA side of agent delegation.

Dev side (kept expensive on purpose)

- Claude Opus 4.7

- Claude Sonnet 4.6

- Codex high reasoning

- GPT-4 with deep reasoning

- Gemini 2.5 Pro

QA side (delegated to cheaper)

- Claude Haiku 4

- Claude Sonnet 4 (low effort)

- Codex mini

- GPT-4 mini

- Gemini 2.5 Flash

Agent delegation does not lock the matrix. You configure the QA model per project. You can even agent-delegate to a totally different provider : Opus on dev, Codex mini on QA, no shared context, just an MCP call.

What agent delegation actually does under the hood

Agent delegation sits on the AgentsRoom MCP stack. The dev agent runs inside its CLI (Claude Code, Codex, Gemini, OpenCode, Aider). AgentsRoom injects the Test Runner MCP server into that agent. The Test Runner exposes one tool : run_qa_test. That is the entry point of every agent delegation call.

When run_qa_test fires, AgentsRoom spawns a new CLI process in the same project, with a different config. That config has the Browser MCP attached, the QA system prompt attached, and the model swapped to whatever you set on the QA side. The new process is an ephemeral QA agent : it lives for the duration of the test and dies after submit_verdict.
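As a rough picture of that spawn step, here is a sketch of deriving the QA config from the dev config. The `AgentConfig` shape, its field names and the `buildQaConfig` helper are all hypothetical: the page only says the project stays the same while the model, the system prompt and the attached MCP change.

```typescript
// Hypothetical config shape. AgentsRoom's real spawn config is not shown on
// this page, so every field name below is an assumption.
interface AgentConfig {
  projectDir: string;
  model: string;
  mcpServers: string[];
  systemPrompt: string;
}

// Build the config for the ephemeral QA process from the dev agent's config.
function buildQaConfig(
  dev: AgentConfig,
  qaModel: string,
  qaSystemPrompt: string
): AgentConfig {
  return {
    projectDir: dev.projectDir,   // same project as the dev agent
    model: qaModel,               // model swapped to the QA-side choice
    // Browser MCP attached. Whatever else the QA agent needs (for example
    // the server that exposes submit_verdict) is left out of this sketch.
    mcpServers: ["browser"],
    systemPrompt: qaSystemPrompt, // QA system prompt attached
  };
}
```

The helper returns a fresh object and leaves the dev config untouched, which mirrors the idea that the QA agent is a separate process with no shared context.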

While the QA agent runs, the dev agent is paused on the run_qa_test call. AgentsRoom shows the QA agent in the same agent list, indented under the dev agent (visible in the image above). When the QA agent finishes, its verdict is returned as the run_qa_test result and the dev agent resumes. Agent delegation is a single MCP round trip from the dev agent's point of view.

The dev agent never gets the browser tools. AgentsRoom strips the browser_* tools from the dev agent's allowed list at spawn time. That is the part that makes agent delegation reliable : the dev agent cannot fall back to doing the test itself, even when its instinct is to grab a screenshot. The only path forward is run_qa_test. Agent delegation by removal, not by request.
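Delegation by removal comes down to a filter over the allowed tool list. The sketch below assumes the list is a plain array of tool names and that every browser tool carries the `browser_` prefix, as the `browser_*` pattern suggests. The `stripBrowserTools` helper and the `edit_file` tool name are hypothetical.

```typescript
// Remove every browser_* tool from an allowed-tools list.
// Assumption: tools are identified by name and browser tools share the
// "browser_" prefix. The real allowlist format may differ.
function stripBrowserTools(allowedTools: string[]): string[] {
  return allowedTools.filter((toolName) => !/^browser_/.test(toolName));
}

// Example: the dev agent keeps run_qa_test but loses direct browser access.
const devAllowedTools = stripBrowserTools([
  "run_qa_test",
  "browser_navigate",
  "browser_screenshot",
  "edit_file", // hypothetical non-browser tool
]);
```

Once the list is filtered, the only browser path left to the dev agent is `run_qa_test`, whatever its prompt says.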

Where agent delegation runs today, and where next

Agent delegation in AgentsRoom is browser-first today. Same shape, more surfaces coming.

Today : browser test delegation

The QA agent drives the AgentsRoom embedded browser through the Browser MCP. Localhost dev server, public preview tunnel, staging URL, anything Chromium can render. Forms, modals, drag and drop, dialogs, console logs, network errors. Agent delegation covers the full surface a web QA engineer would cover.

Electron app test delegation

If you ship an Electron app yourself, you can install the AgentsRoom Electron MCP library in your project. The QA agent connects to your Electron app the same way it connects to a Chromium tab. Agent delegation crosses into desktop app testing without changing the dev side at all.

React Native app test delegation (roadmap)

The same agent delegation shape is coming to React Native. The QA agent will drive an iOS or Android simulator through an AgentsRoom React Native MCP. Dev agent ships a screen, QA agent taps through it. Same run_qa_test call, same dev to QA handoff, mobile target.

Without agent delegation vs with agent delegation

Same feature, same QA pass. Different bill, different context, different reliability.

Without agent delegation

- The dev agent (expensive) opens the browser itself.

- Every screenshot, every DOM dump and every console log lands in the dev agent's context.

- 20 minutes of clicking burns Opus tokens on work a cheaper model would do.

- The dev agent forgets what it was doing two screenshots in.

- You pay full price for browser clicks, the planet pays full price too.

With agent delegation

- The dev agent calls run_qa_test and waits.

- A cheap QA agent does the clicks, the asserts, the screenshot capture.

- Only the verdict (pass, fail, summary) reaches the dev agent.

- The QA agent is ephemeral: it dies after submit_verdict, no context bloat.

- Token bill drops, dev agent stays focused, loop closes on its own.

Agent delegation is the cheapest reliability win you can wire into a coding agent setup.

What an agent delegation call looks like

Here is the entire shape of a dev-to-QA agent delegation. The dev agent fires this through the Test Runner MCP and waits for the response.

MCP tool call (dev agent)

run_qa_test({
  scenario: "Open http://localhost:3000/login.\n Type the seeded test user in the email field.\n Submit the form.\n Assert the dashboard URL is reached and the user's name is shown in the header.\n Capture a screenshot on success, capture console logs on failure."
})

FAQ

What is agent delegation in AgentsRoom ?

Agent delegation is a dev to QA handoff between two AI coding agents. The dev agent finishes a feature, calls a single MCP tool (run_qa_test), and an ephemeral QA agent runs the test on a different model. The dev agent reads the verdict and moves on. The whole agent delegation flow happens through the AgentsRoom MCP servers.

Why would I want agent delegation at all ?

Three reasons. Money : the QA agent runs on a cheaper model, so test passes cost a fraction of what they would on the dev model. Context : the dev agent stays clean, all the screenshots and DOM dumps die with the QA agent. Reliability : the QA agent has one job, so it tests better than a dev agent multitasking on browser clicks.

Which models work for agent delegation ?

Any model AgentsRoom supports : Claude (Opus, Sonnet, Haiku), Codex (high, mini), Gemini (Pro, Flash), OpenCode, Aider. Agent delegation is cross-provider. A common split is Claude Opus or Codex on the dev side and Claude Haiku or Codex mini on the QA side, but you choose.

Is agent delegation only for browser tests ?

Browser tests are the main target today: the QA agent drives the AgentsRoom embedded Chromium browser. The same agent delegation shape extends to Electron apps (install the AgentsRoom Electron MCP library in your Electron project) and, on the roadmap, to React Native apps (iOS and Android simulators).

How does agent delegation avoid the dev agent doing the test itself ?

AgentsRoom strips the browser_* tools from the dev agent at spawn time. The dev agent literally cannot call browser_navigate or browser_screenshot. The only browser path is run_qa_test, which fires agent delegation. The constraint is mechanical, not a polite request in a prompt.

Is agent delegation cloud or local ?

Local-first. The dev agent, the ephemeral QA agent, the MCP bridge and the browser all run on your machine. Agent delegation only uses the cloud when the underlying model (Claude, Codex, Gemini) talks to its own provider, exactly like a normal agent run.

Does agent delegation save real money ?

Yes, by a meaningful factor for QA-heavy days. A complex end-to-end test on Opus or Codex high vs the same test on Haiku or Codex mini is roughly a 10x cost difference. Across a full dev day and a whole team, that gap adds up fast.

What does the dev agent get back from agent delegation ?

A short structured verdict : pass, fail or inconclusive, with a summary, optional screenshot path and optional console logs. No raw screenshots in context, no DOM dumps. That is the whole point of agent delegation : isolate the QA noise inside the QA agent.

Can the QA agent file a backlog ticket when it fails ?

Yes. Agent delegation gives the QA agent the Backlog MCP. A failure can land as a backlog ticket on the project, with the scenario, screenshot and console logs attached. The dev agent reads the verdict and the backlog ticket carries the long form details.

Where does agent delegation fit relative to the other AgentsRoom features ?

Agent delegation lives on top of Browser Automation (which gives the QA agent the browser) and the AgentsRoom MCP servers (which give every agent its tool surface). Agent Teams is the broader multi-agent workflow editor : agent delegation is the dev to QA flavor of that workflow, but exposed as a single MCP call so any agent in any provider can use it without configuring a graph.

Goes well with

Browser Automation

The Chromium and Browser MCP layer the QA side of agent delegation drives. Real persistent browser per project.

Agent Teams

Visual multi-agent workflow editor. Agent delegation is the dev to QA flavor, Agent Teams is the full graph version with N nodes and feedback loops.

AgentsRoom MCP

The MCP servers that make agent delegation possible : Test Runner, Browser, Backlog, Terminal Commands, Prompt Library.

Multi-Provider

Run Claude, Codex, Gemini, OpenCode and Aider side by side. Agent delegation is the cross-provider angle of the same idea.

Claude Code Token Usage

Live token meter per session. The fastest way to confirm the dollar savings agent delegation gives you in practice.

Public Backlog

When a QA agent fails an agent delegation pass, the bug lands here. Clients and teammates see the regression, the dev agent picks it up.

Stop paying Opus prices for QA clicks

Download AgentsRoom and try agent delegation. Wire your dev agent on the model you trust, your QA agent on a cheaper model, and let the dev to QA handoff happen on its own through MCP.
